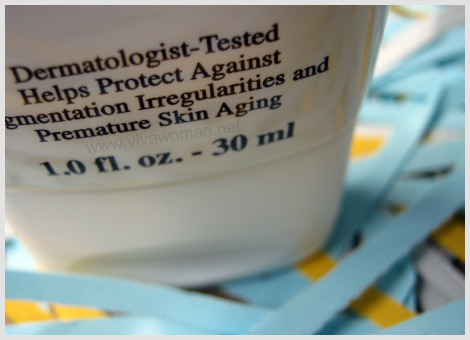

Dermatologist-tested products: do you care?

Are you more inclined to buy a skincare product if the phrase “dermatologist-tested” appears on the packaging? Do you perceive the product to be better because of its association with skincare experts?

For as long as I can remember, these words have never meant much to me. At least, they’re never the first thing to influence my purchasing decision. I might take note of them, but I wouldn’t buy a product just because it was tested by some dermatologists. Besides, I have no idea whether the phrase refers to one dermatologist or a group, or who they are. Perhaps the phrases “clinically tested” or “laboratory tested” carry more weight, but again, I note that most of these tests are done by the company itself and are not independent or unbiased. More often than not, there is fine print indicating that results will vary between individuals.

So what’s your take?

Comments


they do look a bit more credible to me than products without the label, but i wouldn’t make a purchasing decision just because of that. i’d still rather use what’s suitable for my skin after a road test, derma tested or not

Hmmm, actually I am not too sure what dermatologist tested means. I also don’t really take it into account. I am more influenced by claims like “No Preservatives” and “No Allergens”, because they save me time scrutinising the ingredients. haha.

i don’t really take it into consideration. after all, to me “dermatologist tested” is usually linked to claims like non-comedogenic or non-irritating, and breakouts and irritation still happen despite these claims.

At the level of perception, there is some association between dermatologist-tested products and efficacy. True or not, I guess it still depends on whether the product suits the user.

Same here! When I see products listing those, I tend to pick it up to check.

Ah…that’s an interesting point. Seems that dermatologist-tested also usually appears with words like hypoallergenic.

I keep a lookout for it on the products I’m interested in getting, but it’s not something I insist on. What about pharmaceutical-tested products? That sounds more rigorous.

Why are things so complicated these days? Sigh…

I am constantly amazed at how careless I am when it comes to my purchases! I never bother with such details, Sesame….I should start.

After years of using skincare products, I only trust my own skin nowadays. If my skin is sensitive to a product, whatever the manufacturer says is beside the point.

I remember my biology teacher saying it is indeed a ‘stupid phrase’ that could mean the product was tested on just 1 person. Since then I don’t really care about this phrase, because things always react differently on each person’s skin anyway.

“Dermatologically tested” doesn’t mean anything to me! Most products that are tested make me break out anyway. Even those that are hypoallergenic!!! I’m now smart enough to use only all-natural products, and guess what? My skin has never looked better. I used to buy expensive creams for my sensitive skin, only to find that after 2 weeks of use I developed some kind of reaction to them. Now, I just use Jojoba or Grapeseed Oil. My skin looks good and so does my bank account, haha!

They sound the same to me. I guess from the manufacturer’s standpoint, adding claims like this can increase credibility.

I don’t think you need to be bothered much. Like the rest mentioned, it doesn’t make much of a difference.

Good point! I would always pick up products that I think work for me and if they have such claims, maybe it lends some weight…

Your biology teacher is right! We don’t know if it’s one or a group… most of the time it’s one, and I’m not sure who. Even if I did know, I’m uncertain whether I could trust his/her opinion. Like you said, the product will work differently on different skin types.

Yeah, those words don’t mean much… especially hypoallergenic, because I noticed some products stating so contain questionable ingredients.

I’m glad you found something that worked for you. Between Jojoba and Grapeseed, I like Grapeseed better. Jojoba is too oily for me.

omg, I totally agree with everyone else. The only thing you can trust is your own skin. I keep thinking I have sensitive skin, because even when I use all sorts of claimed-to-be friendly products, they just don’t work. I didn’t know so many people are facing the same problem.

For me, it really means nothing unless they can guarantee I won’t break out. And that’s pretty much impossible to tell.

To me, it means: go read the reviews for it on MUA :P.

All of it is nonsense. “Not sold in stores!” is another annoying one.

Hi Sesame,

I look at your blog very often, though this is the first time I’ve commented… I must say, you have an amazing blog.

Coming to the topic… my teenage cousin buys products if they are dermatologist tested, but I don’t, and I am more worried about the ingredients. So I guess they have their own markets.

Also, did you happen to look at mountainroseherbs.com? They are an amazing all-organic store.

Oh boy, I can definitely insert my 2 cents on this one. A while ago I did a little test just for myself: I pretended to be a consumer and wrote an email to 3 companies that made claims like “dermatologist tested”, “pediatrician approved”, “award winning” or “doctor recommended”, asking for more information as well as the names of the dermatologists, pediatricians and so on who “approved” their products, for verification purposes. It’s been about 6 months since, and I am still waiting for their reply. None of the companies responded. None. So, having said that, all these claims are bogus. They don’t mean anything. If I mail a sample to a dermatologist or pediatrician, I have every right to claim that it’s been tested. What does that mean? Nothing. Does it make my product better in any way? I don’t think so. As for “laboratory tested”: all products have to be tested for stability, and those words by themselves don’t add anything to the product. “Clinical studies” blah, blah, blah… good luck tracking them down, or the actual people participating in them. That could be fun. “Hypoallergenic” is a good catchy word, but I have to say that it also doesn’t mean anything. Some people’s skin can still react to the most natural ingredients, and you won’t know until you try the product. I also have a word to say about products that claim they “don’t have preservatives”. Be careful of those unless you know you will use them up within a month or so. Oil-based products are fine for the most part and often don’t require preservatives. But if a water-based product makes that claim (creams, shampoos, toners; read the label to see if there is water), it has to have a preservative of some type, or it will grow bacteria shortly after production. Trust me, bacteria can kill you; preservatives can’t. These days more and more new “catch claims” appear every day to sell you on a new miracle. Just do your own research instead of simply believing the label.
OK, that was a bit long, but at least it’s off my chest.

Sesame is spot on; these words shouldn’t be part of your purchasing decision, especially if you’re looking to address certain skin concerns.

This industry is so lucrative that some brands turn to cheating in this way.

Dermatologists do not usually test commercial products; they see patients. Only a few conglomerates genuinely enlist well-known dermatologists to assist them with testing, and these are usually the Neutrogenas, Aveenos and Galdermas (which produces Cetaphil) of this industry.

Brands that are not part of a larger established group do not have the funds to go about having derms ‘testing’ their products.

They simply say so on the packaging. It isn’t illegal, but it probably isn’t ethical either, and this trick seldom works for long because consumers figure it out quickly enough.

I like to look at the ingredients list, especially the first 3 items. These make up between 50–90% of the product that comes into contact with my skin.

You know, before I developed acne-prone skin, everything worked for me, but now I’m so scared to try new products. I remember a mineral makeup breaking me out big time even though it was supposed to be more skin-friendly!

Haha…that’s truly impossible.

Not sold in stores? I haven’t seen this one or maybe I haven’t paid attention. What does it mean? That the product is more exclusive?

Oh yes, mountainroseherbs has some good products, but I used to join sprees to purchase from Garden of Wisdom instead, cos I was told shipping from the former to Singapore is quite expensive.

That’s interesting… that you wrote to 3 companies and none replied! Actually, I was reading Dr Ellen Marmur’s Simple Skin Beauty, and even though she’s a dermatologist, she also says these phrases (dermatologist-tested and hypoallergenic) mean nothing.

And then there are those marketing buzzwords. Just yesterday I came across a new product that claims to “take you beyond white to aura bright”. Whatever that means!

I have also met dermatologists who were not able to answer some of my skin concerns as thoroughly as I expected… they’re human and they can be biased. Even if they’ve tested a product, it doesn’t guarantee anything.

Chris, I apologise for not replying to your email yet. I’m still mulling over it (ya… long time) cos of my current skin issues.

no worries, it’s no rush really. But i’m curious, may I ask what your skin concerns are? You seem very diligent when it comes to what you do for your skin.

I have recurring acne issues – most likely an internal problem but I’m more careful about what I use externally too. Yes, I’m pretty diligent but acne is pretty annoying…and persistent too.

All that matters to me is the ingredients list. I do not care about the other claims; the ingredients say it all. For me, a product is especially potent if it contains organic ingredients from a good source and is processed and packaged in the most ethical way.

Wow, “take you beyond white to aura bright”? That’s definitely creative, I have to give them that. It’s just that I personally don’t know anyone who has actually seen an aura. It’s invisible to most of us, as far as I know. So the product’s message would translate into “buy our product and you will become so bright that you will be invisible to most people” hahaha… genius.

Haha…becoming invisible indeed! Or everyone else has to wear sunglasses around this person with aura brightness!

For me, these labels are very important because they are your protection in a lawsuit against these companies if something bad happens to you when you use their products. A simple “dermatologist approved” or “clinically approved” can help you win a case against them. Also, as an advertising student, I know very well not to trust these “X tested” products. Yes, they are tested, but are they approved? Advertisers can get away with that, letting people think their products are legit when in reality they aren’t approved. People tend to take labels for granted, but they don’t know how big their effects are.

This sounds great for someone with mild acne. I’m using what is suitable for my skin. I have sensitive skin. Thank you for sharing!